May 2026 Maintenance - Sol Upgrades

This post summarizes the updates and improvements deployed during the May 2026 maintenance window on Sol. Sol received a cluster-wide firmware refresh, an updated kernel and driver stack, a Slurm upgrade with a new job submit plugin, and a refreshed GPU resource naming scheme to improve clarity for users.

GPU Resource Naming

The naming of A100 GPU resources on Sol has been updated to improve readability and make it easier to target a specific class of GPU. MIG (Multi-Instance GPU) functionality, performance, and availability are unchanged; only the resource names that users specify in job scripts have been updated.

New naming convention:

- 20 GB A100 "MIG" slice → a100.20gb

- 40 GB A100 GPU → a100.40gb

- 80 GB A100 GPU → a100 (unchanged for compatibility)

To request a specific GPU type, update your job scripts and interactive commands accordingly:

#SBATCH -G a100.20gb:1

#SBATCH -G a100.40gb:1

Jobs that were pending in the queue specifically targeting the renamed resources will need to be canceled and resubmitted using the new names.
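As a rough illustration of the naming convention above, the sketch below maps an A100 memory size to the resource name to pass to `-G`. The helper name `gpu_request` is hypothetical and is not a Sol or Slurm command; only the resource names come from this post.

```shell
#!/usr/bin/env bash
# Sketch: map an A100 memory size (in GB) to the Sol GPU resource name.
# gpu_request is a hypothetical helper, not a Sol or Slurm command.
gpu_request() {
    local mem_gb="$1" count="${2:-1}"
    case "$mem_gb" in
        20) echo "a100.20gb:${count}" ;;   # 20 GB MIG slice
        40) echo "a100.40gb:${count}" ;;   # 40 GB A100
        80) echo "a100:${count}" ;;        # 80 GB A100, name unchanged
        *)  echo "unknown A100 size: ${mem_gb}" >&2; return 1 ;;
    esac
}

# Print the flag to use with sbatch, salloc, or srun.
printf '%s\n' "-G $(gpu_request 40 2)"
```

Run as written, this prints `-G a100.40gb:2`. The same value can be placed after `#SBATCH -G` in a job script.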

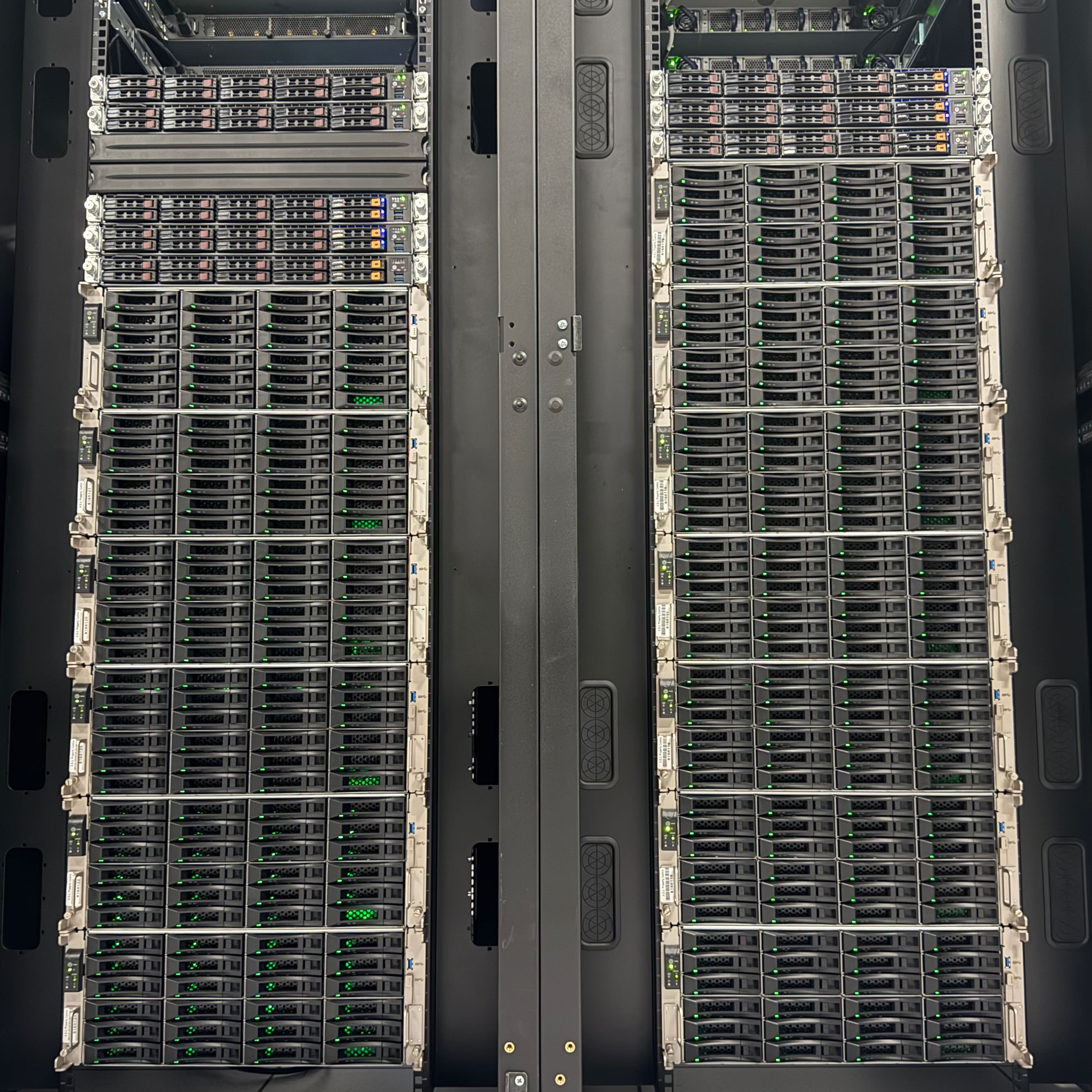

Firmware and NVIDIA Driver Updates

Firmware was refreshed across the cluster during this maintenance window. GPU nodes, CPU nodes, and login nodes were all brought current.

As nodes rebooted into the new image, they picked up:

- Latest kernel and security updates

- A custom BeeGFS client patch enabling relatime (relative access timestamps), matching the change applied to Phoenix in the March 2026 maintenance. This significantly improves detection of active scratch files, reducing false positives when identifying data for removal.

- NVIDIA GPU driver updated to 595.71.05 to support CUDA 13.2

- ROCm and AMD GPU drivers updated to 7.2.3

- Updated NVIDIA DOCA drivers for ConnectX devices
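To show why accurate access timestamps matter when identifying inactive scratch data, here is a minimal sketch that lists files whose last access is older than a threshold. It is not the tooling used on Sol, which this post does not describe. The helper name `list_stale` and the 90-day default are assumptions made for illustration only.

```shell
#!/usr/bin/env bash
# Sketch: list files whose last access time is older than a threshold.
# list_stale and the 90-day default are hypothetical, not Sol policy.
list_stale() {
    local dir="$1" days="${2:-90}"
    # -atime +N matches files last accessed more than N whole days ago.
    find "$dir" -type f -atime +"$days" -print
}

# Demo on a throwaway directory: one recently accessed file, one old one.
demo_dir="$(mktemp -d)"
touch "$demo_dir/fresh.dat"
touch -a -d '200 days ago' "$demo_dir/old.dat"   # GNU touch; sets only the access time
list_stale "$demo_dir" 90
rm -rf "$demo_dir"
```

In the demo only `old.dat` is listed. A scan like this is only as reliable as the access times the filesystem records.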

Slurm Updates

Slurm has been upgraded to 25.11.6 on Sol, bringing in upstream bug fixes and security updates.

Alongside the version bump, a new job_submit.lua plugin was deployed with an accompanying test suite that exercises every condo QOS, ensuring job submission rules behave consistently across partitions.

Partition sets have also been simplified to reduce confusion when selecting where to run jobs, and outdated class accounts have been removed.

GPU Benchmarking

A selected subset of the public A100_80 GPUs was benchmarked with the HPL benchmark from the NVIDIA HPC-Benchmarks container.

| Year | GPUs | Rmax (PFLOPS) | Per-GPU (TFLOPS) | Rpeak (TFLOPS) | Efficiency |
|---|---|---|---|---|---|
| 2025 | 240 | 2.620 | 10.92 | 4,680 | 56.0% |
| 2026 | 224 | 2.617 | 11.68 | 4,368 | 59.9% |
| Δ | −16 | −0.1% | +7.0% | — | +3.9 pp |

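The 2026 software and driver stack delivers a measurable efficiency gain over the 2025 baseline: HPL efficiency rose from 56.0% to 59.9% (+3.9 percentage points) and per-GPU throughput rose 7.0%, so 224 GPUs nearly matched the Rmax achieved with 240 GPUs in 2025.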
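The derived columns in the table can be recomputed from the GPU count, Rmax, and Rpeak: per-GPU throughput is Rmax divided by the number of GPUs, and efficiency is Rmax divided by Rpeak. The sketch below does this with `awk`, taking Rmax in TFLOPS (2.620 PFLOPS = 2620 TFLOPS). The helper name `hpl_summary` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: recompute the derived HPL columns from GPU count, Rmax, and Rpeak.
# Rmax and Rpeak are both given in TFLOPS. hpl_summary is a hypothetical helper name.
hpl_summary() {
    local gpus="$1" rmax_tflops="$2" rpeak_tflops="$3"
    awk -v n="$gpus" -v rmax="$rmax_tflops" -v rpeak="$rpeak_tflops" \
        'BEGIN { printf "%.2f TFLOPS/GPU, %.1f%% efficiency\n", rmax / n, 100 * rmax / rpeak }'
}

hpl_summary 240 2620 4680   # 2025 row
hpl_summary 224 2617 4368   # 2026 row
```

The two calls reproduce the Per-GPU and Efficiency values for the 2025 and 2026 rows.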

Technical Updates

- Slurm updated to 25.11.6 on Sol

- BeeGFS servers updated from 7.4.6 to 7.4.7

- BeeGFS relatime patch applied to Sol clients, improving detection of active scratch files

- NVIDIA GPU driver updated to 595.71.05 (CUDA 13.2 support) across the cluster

- ROCm and AMD GPU drivers updated to 7.2.3

- NVIDIA DOCA drivers updated for ConnectX devices

- Home directories now statically mounted on Sol, improving reliability (/data directories remain dynamically mounted on demand)

- Open OnDemand updated on Sol

- Mamba, Jupyter, and Jupyter AI updated to the latest versions

- Obsolete Mamba environments and Jupyter kernels hidden from the OOD interface

- General firmware, kernel, and security updates applied to all systems